March 24, 2026 - by Pritesh Thakor

What happens when your CEO asks for real-time performance visibility across all regions before a board meeting?

In many enterprises, that request triggers a familiar scramble — exporting data from multiple ERP systems, reconciling conflicting numbers from regional databases, and manually adjusting spreadsheets well into the night. By the time the insights are presented, the data is already outdated — and no one is completely confident in the numbers.

Such scenarios are becoming increasingly common because many companies continue to rely on traditional on-premises data warehouses built for predictable, batch-driven reporting – not real-time analytics, global data integration, or AI-powered forecasting.

As data volumes surge and business expectations accelerate, Google Cloud Platform (GCP) helps address these challenges by providing a serverless, high-performance foundation for enterprise-grade data warehousing. Read on to see how organizations can use GCP’s fully managed ecosystem to modernize their data architecture and improve operational agility, scalability, and cost-efficiency.

Why Modern Data Warehouses Need Google Cloud Platform (GCP)

Traditional on-premises data warehouses were engineered for the predictable workloads and periodic reporting cycles of a previous era. These legacy environments impose rigid operational constraints that hinder business growth, whereas cloud-native solutions provide the agility required to compete in a data-driven economy.

Today’s enterprises operate in a world of continuous data streams from ERPs, CRMs, SaaS platforms, and IoT devices, and executives expect dashboards that refresh in near real time. Real-time intelligence and seamless scalability have become essential for managing the rapid growth of data across the business.

Cloud-native platforms like GCP eliminate these constraints by offering elasticity, automation, and integrated intelligence, turning the data warehouse from a cost center into a strategic growth engine. The table below compares legacy on-premises data warehouses with Google Cloud Platform (GCP):

| Evaluation Criteria | Legacy On-premises Systems | Google Cloud Platform (GCP) |
|---|---|---|
| Scalability | Static: Requires physical hardware procurement; scaling is a months-long process. | Elastic: Near-instant, automated scaling of compute and storage to meet demand. |
| Operational Effort | High Overhead: Extensive manual effort for patching, tuning, and capacity planning. | Serverless: Fully managed services (like BigQuery) eliminate infrastructure management. |
| Cost Model | CapEx-heavy: High upfront investment with significant ongoing maintenance costs. | OpEx-Optimized: Pay-as-you-go consumption; aligns costs directly with business value. |
| Data Diversity | Siloed/Structured: Optimized for relational data; struggles with semi-structured formats. | Unified: Natively handles structured, semi-structured (JSON), and unstructured data. |
| Time-to-Insight | Delayed: Dependent on rigid batch processing and long ETL cycles. | Real-Time: Supports high-velocity streaming and sub-second query performance. |
| Intelligence | Manual Integration: Requires complex add-ons for AI and Machine Learning. | Native AI: Seamless access to Vertex AI for predictive modeling and insights. |

Core GCP Services for Building a Modern Data Warehouse

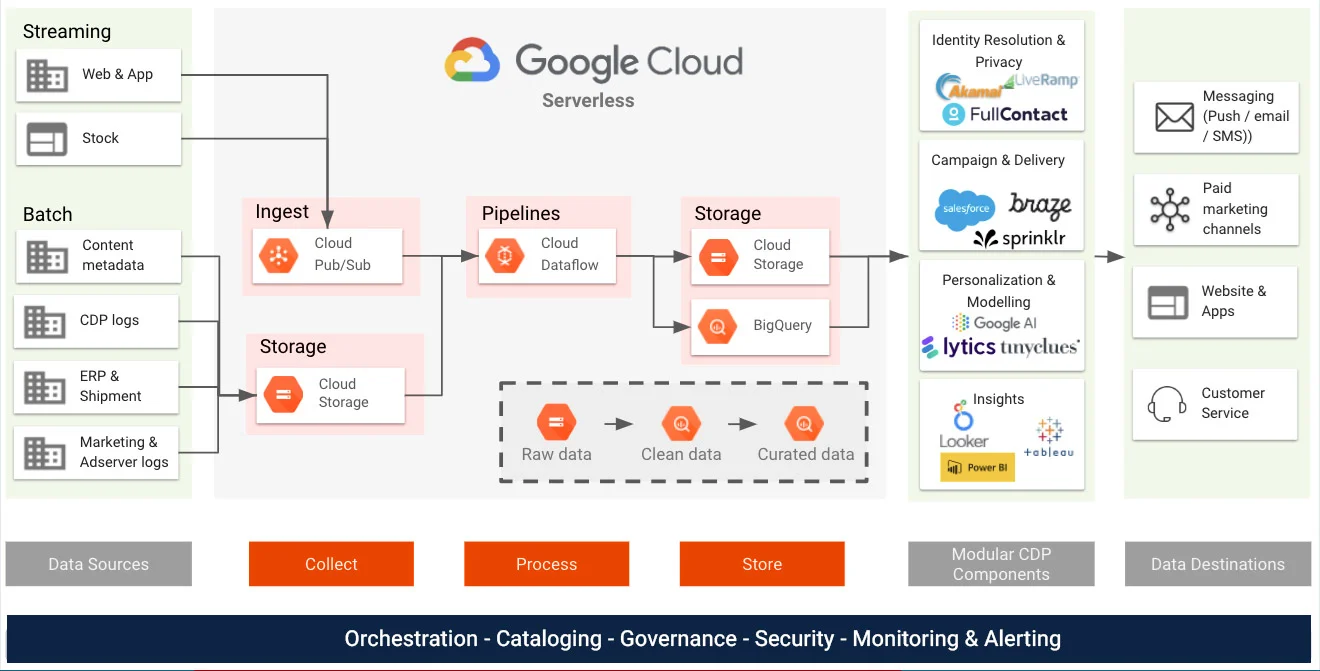

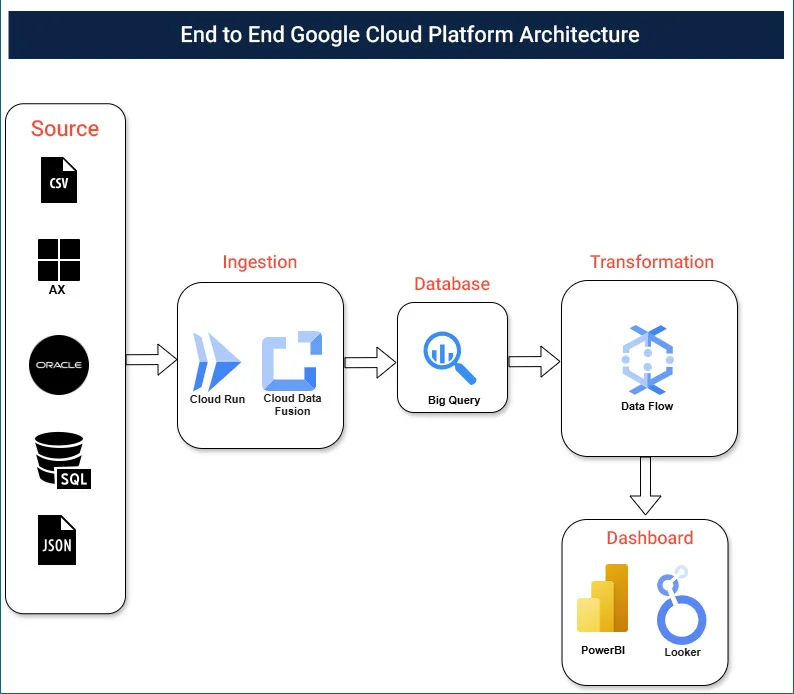

A modern data warehouse on Google Cloud Platform (GCP) is built using a layered and scalable architecture. This approach makes it easier to manage growing data, improve performance, and maintain strong security, all while keeping costs under control.

Below is a simplified view of how the architecture works and which GCP services support each layer.

1. Ingestion (Collecting Data)

This layer is responsible for collecting and importing data from different sources into Google Cloud.

- App Engine – Run applications that generate and send data to the cloud.

- Compute Engine – Virtual machines that host applications and workloads.

- Cloud Functions – Event-driven serverless functions for lightweight data processing.

- Cloud Run – Run containerized applications without managing servers.

- Pub/Sub – Real-time messaging and event streaming service.

- Cloud Storage – Stores raw structured and unstructured data and acts as a landing zone for incoming files.
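
To make the landing-zone idea concrete, here is a minimal sketch of how incoming files might be named so that raw data stays organized by source and date. The `raw/<source>/dt=YYYY-MM-DD/` layout, the function name, and the sample source name are assumptions chosen for illustration, not a GCP requirement or the approach used in the case study below.

```python
from datetime import datetime, timedelta, timezone


def landing_object_name(source: str, event_time: datetime, filename: str) -> str:
    """Build a date-partitioned object name for a raw landing zone.

    The raw/<source>/dt=YYYY-MM-DD/<filename> layout is a hypothetical
    convention for this sketch.
    """
    # Normalize to UTC so files from different regions share one partition scheme.
    # (A naive datetime would be interpreted as local time by astimezone.)
    utc_time = event_time.astimezone(timezone.utc)
    return f"raw/{source}/dt={utc_time:%Y-%m-%d}/{filename}"


# A file produced at 23:30 in a UTC-5 region lands in the next UTC day.
name = landing_object_name(
    "erp_americas",
    datetime(2026, 3, 24, 23, 30, tzinfo=timezone(timedelta(hours=-5))),
    "orders.json",
)
print(name)  # raw/erp_americas/dt=2026-03-25/orders.json
```

A consistent naming scheme like this makes it easier for downstream jobs to pick up only the files for a given source and day.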

2. Storage (Storing Data)

This layer stores structured and unstructured data securely and reliably.

- Cloud Storage – Scalable object storage for files, backups, and raw data.

- Cloud SQL – Managed relational database service.

- Cloud Bigtable – High-performance NoSQL database for large workloads.

- BigQuery – Serverless enterprise data warehouse.

3. Analysis (Transforming and Analyzing Data)

This layer prepares data for reporting, analytics, and machine learning.

- Dataflow – Serverless batch and streaming data processing.

- Dataproc – Managed Spark and Hadoop for big data workloads.

- Cloud Composer – Workflow orchestration using Apache Airflow.

- BigQuery – Perform high-speed SQL analytics on large datasets.

- Dataprep – Clean and prepare data visually.
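
Workflow orchestration is largely about running steps in dependency order. The sketch below illustrates that idea with Python's standard library only; it is not Airflow or Cloud Composer code, and the step names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each step maps to the set of steps it depends on.
pipeline = {
    "land_raw_files": set(),
    "load_to_warehouse": {"land_raw_files"},
    "transform_sales": {"load_to_warehouse"},
    "transform_inventory": {"load_to_warehouse"},
    "refresh_dashboards": {"transform_sales", "transform_inventory"},
}


def execution_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return one valid run order; graphlib raises CycleError on circular dependencies."""
    return list(TopologicalSorter(dependencies).static_order())


order = execution_order(pipeline)
print(order)
```

The two transform steps have no dependency on each other, so an orchestrator is free to run them in parallel once the load step finishes.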

4. Visualization (Enabling Business Insights)

This layer helps users explore data and create reports and dashboards.

- Looker – Enterprise BI platform with governed metrics.

- Cloud Datalab – Interactive notebook for data analysis (since deprecated; Vertex AI Workbench is the current managed notebook option).

- Looker Studio (formerly Data Studio) – Self-service dashboarding tool.

- BigQuery – Direct SQL-based data exploration.

- Google Sheets – Connect and analyze cloud data in spreadsheets.

- Data Catalog – Centralized data discovery and metadata management.

Real-World Success: Data Warehouse Modernization for a Global Manufacturing Leader

Client Challenge: Decentralized Data Landscape

As a global manufacturing leader with over 300 subsidiaries, the client faced significant challenges managing a massive, decentralized data landscape. Legacy on-premises systems created bottlenecks, including fragmented data silos (SQL, Oracle, and JSON), high maintenance costs, and slow reporting cycles that hindered executive decision-making.

To resolve this, the company sought to migrate to Google Cloud Platform (GCP), shifting from manual infrastructure management to an automated, AI-ready data strategy.

The Solution: A Unified GCP Architecture

To address these challenges, a modern, layered data architecture was implemented:

A. Centralized Data Warehouse: BigQuery

- BigQuery was deployed as the core analytics engine.

- It consolidated data from Oracle, SQL, and JSON sources into a single, petabyte-scale environment.

- Its serverless nature allowed Nidec business users to run complex queries across global datasets without managing hardware.

B. Modern ELT Orchestration

- Cloud Data Fusion: Used for codeless data integration, allowing teams to quickly build pipelines from legacy Oracle and SQL databases.

- Cloud Run: Utilized for lightweight, containerized microservices to handle custom data transformations and JSON parsing at scale, ensuring an “ELT” (Extract, Load, Transform) approach that preserves raw data integrity.
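
The ELT pattern described above can be sketched in a few lines: load the raw records unchanged, then transform them into a typed, curated table afterward. This example uses Python's built-in `sqlite3` as a local stand-in for the warehouse; the table names, field names, and sample records are hypothetical and do not reflect the client's actual schema.

```python
import json
import sqlite3

# sqlite3 is a local stand-in for the warehouse in this sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_orders (payload TEXT)")
conn.execute("CREATE TABLE curated_orders (order_id TEXT, region TEXT, amount REAL)")

# Extract + Load: store each source record exactly as received.
source_records = [
    '{"order_id": "A-1", "region": "EMEA", "amount": "120.50"}',
    '{"order_id": "A-2", "region": "APAC", "amount": "80"}',
]
conn.executemany(
    "INSERT INTO raw_orders (payload) VALUES (?)",
    [(record,) for record in source_records],
)

# Transform: parse and type the data after loading; raw_orders is left untouched.
for (payload,) in conn.execute("SELECT payload FROM raw_orders").fetchall():
    record = json.loads(payload)
    conn.execute(
        "INSERT INTO curated_orders VALUES (?, ?, ?)",
        (record["order_id"], record["region"], float(record["amount"])),
    )

total = conn.execute("SELECT SUM(amount) FROM curated_orders").fetchone()[0]
raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
print(total, raw_count)  # 200.5 2
```

Because the raw table is never modified, a transformation bug can be fixed and the curated table rebuilt without going back to the source systems.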

C. Advanced Reporting & Visualization

- Looker / Power BI: Nidec business users integrated Looker for governed, enterprise-wide semantic modeling and Power BI for self-service executive dashboards. This hybrid approach ensured that all departments used the same KPI definitions.
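
One way to picture "the same KPI definitions everywhere" is a single registry that every report draws from. The sketch below is an illustration under stated assumptions: it is not LookML, and the KPI names, expressions, and table and column names are hypothetical.

```python
# Hypothetical KPI registry: one definition per metric, reused by every report.
KPI_DEFINITIONS = {
    "gross_margin_pct": "100 * (SUM(revenue) - SUM(cost)) / SUM(revenue)",
    "on_time_delivery_pct": "100 * AVG(CASE WHEN delivered_on_time THEN 1 ELSE 0 END)",
}


def kpi_query(kpi: str, table: str, group_by: str) -> str:
    """Build a query from the shared definition; raises KeyError for unknown KPIs."""
    expression = KPI_DEFINITIONS[kpi]
    return f"SELECT {group_by}, {expression} AS {kpi} FROM {table} GROUP BY {group_by}"


query = kpi_query("gross_margin_pct", "sales", "region")
print(query)
```

Because every dashboard builds its query from the same expression, a change to a KPI definition is made once and applies to all reports.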

Measurable Outcomes

The migration to GCP had an immediate and significant business impact:

| Key Metric | Achievement |
|---|---|
| Reporting Speed | 65% faster executive and month-end reporting cycles. |
| Cost Efficiency | 35% reduction in total operational and infrastructure costs. |
| Productivity | 40% reduction in manual data preparation and entry effort. |

Conclusion

Data warehouse modernization is not just a technology refresh — it is an architectural transformation that determines how quickly and confidently your enterprise can act on data.

By leveraging cloud-native capabilities like BigQuery, auto-scaling infrastructure, and integrated analytics, organizations can accelerate report cycles, reduce operational costs, and deliver near real-time insights that drive smarter decision-making.

The question is no longer whether to modernize, but how quickly your architecture can evolve to support AI-ready, real-time decision-making.

If you want clear cost savings, better performance, and strong business value, embracing GCP can position your organization for future growth and AI-driven innovation. Connect with our experts to assess your architecture and build a scalable, future-ready data foundation.

About the Author

Pritesh Thakor is a Project Lead at Synoptek, specializing in Business Intelligence (BI) and Google Cloud Platform (GCP) solutions. He plays a key role in defining enterprise data architecture strategy and roadmap on Google Cloud Platform, designing scalable solutions across ingestion, storage, processing, and analytics layers. Pritesh is well-versed in Google BigQuery, Google Cloud Storage, Google Cloud Dataflow, Google Cloud Data Fusion, and Google Cloud Composer, enabling the creation of high-performance, cost-efficient, and reliable data platforms.

Frequently Asked Questions

Modern data warehouse solutions offer several advantages:

- Elastic scalability with pay-as-you-go pricing

- Real-time data ingestion and analytics

- Reduced operational overhead with serverless infrastructure

- Native AI and machine learning integration

- Unified handling of structured, semi-structured, and unstructured data

Before initiating data warehouse modernization, organizations should evaluate:

- Current data sources and integration complexity

- Performance bottlenecks and reporting delays

- Security and compliance requirements

- Scalability needs for future growth

- Alignment with AI and advanced analytics goals